The rapid growth of generative AI tools has raised an important question across education, publishing, and digital content: Are AI detectors accurate when identifying AI-generated text? As AI writing systems become more advanced, many organizations rely on detection tools to distinguish between human-written and machine-generated content.

In practice, evaluating AI detection accuracy is more complex than a single percentage value. Researchers typically assess detectors using multiple metrics, datasets, and testing environments. Because of this, reported results can vary significantly depending on the methodology used. This variation explains why discussions around how accurate AI detectors are often produce different conclusions.

Accuracy rates for AI detectors vary significantly depending on the dataset, text type, and evaluation methodology. The following analysis aggregates results reported across different academic studies and benchmark tests.

The accuracy values discussed in this article represent reported results from different evaluations rather than a single standardized benchmark.

In the sections below, we examine empirical evidence on AI detection accuracy, including results from academic research, comparisons between major detection tools, and error rates such as false positives and false negatives. This analysis helps clarify whether AI detectors are accurate in real-world scenarios and how performance varies across detectors, datasets, and text types.

After discussing the general concept of AI detection accuracy, it is useful to examine empirical evidence from academic studies that measured how well AI detectors identify AI-generated text.

AI detection accuracy across academic studies

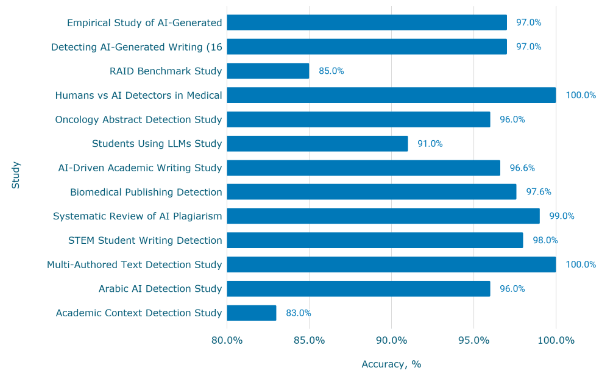

The chart below summarizes results reported in multiple peer-reviewed and benchmark studies evaluating AI detection accuracy across different datasets and research contexts. These studies address the common question of whether AI detectors are accurate by measuring how reliably detection tools distinguish between AI-generated and human-written text.

Accuracy rates for AI detectors vary significantly depending on the dataset, text type, and evaluation methodology. The following comparison aggregates results reported across different academic studies and benchmark tests.

Overall, the results provide a data-driven overview for readers, asking how accurate AI detectors are and whether current systems can consistently identify AI-generated content.

- The highest reported AI detection accuracy reached 100% in both the Humans vs AI Detectors in Medical Writing study and the Multi-Authored Text Detection Study.

- The lowest accuracy in the dataset was 83% in the Academic Context Detection Study, showing that results can vary significantly depending on the testing environment.

- Most studies reported accuracy between 96% and 99%, including 97.6% in the Biomedical Publishing Detection Study and 98% in the STEM Student Writing Detection Study.

How accurate are AI detectors? Evidence from academic research

Across the studies analyzed, most reported accuracy values fall within the 90–100% range, indicating that modern AI detection tools can achieve relatively high performance in controlled research settings. At the same time, the variation between 83% and 100% accuracy demonstrates that the effectiveness of these systems depends heavily on the dataset, text domain, and evaluation methodology.

These findings help answer questions such as how accurate AI is in identifying AI-generated text and whether AI detectors are reliable in real-world scenarios. While the data show that many systems perform well on academic benchmarks, variation across studies suggests that AI detection results should be interpreted in context rather than treated as a single, universal accuracy score.

While academic studies provide a general overview of AI detection accuracy, a closer comparison of individual tools helps answer practical questions, such as whether Turnitin AI detector is accurate and how its performance compares with other AI detection systems.

Turnitin AI detection accuracy compared to other AI detectors

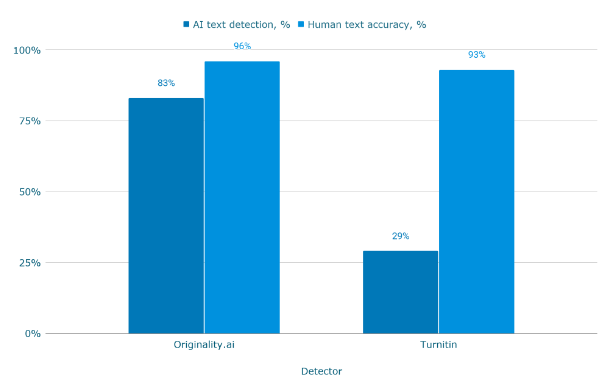

The chart below compares the detection performance of two AI detectors across two metrics: the ability to correctly identify AI-generated text and the accuracy of classifying human-written content.

This comparison helps address common questions such as how accurate the Turnitin AI detector is and whether its performance aligns with the reported Turnitin AI detection accuracy in academic evaluations. The results come from a study that tested both detectors on datasets containing AI-generated, human-written, and hybrid texts.

Accuracy rates for AI detectors vary depending on the dataset, text type, and evaluation methodology. The following comparison reflects results reported in a specific study rather than a universal benchmark.

- Originality.ai detected AI-generated text with 83% accuracy, compared to 29% for Turnitin in the same evaluation.

- Turnitin correctly identified human-written text in 93% of cases, slightly lower than Originality.ai at 96%.

- The difference in AI detection capability between the two systems reached 54 percentage points (83% vs 29%) in this dataset.

How accurate is Turnitin AI detector compared to other tools?

The comparison highlights how results can vary significantly between AI detection tools when evaluating AI-generated text. In this dataset, the reported Turnitin AI detection accuracy for identifying AI content was considerably lower than that of the alternative system, even though both tools demonstrated relatively high accuracy when classifying human-written text.

These findings contribute to ongoing discussions about whether the Turnitin AI detector is accurate and illustrate why reported Turnitin AI detection accuracy should be interpreted within the context of specific testing conditions. Overall, the data suggest that detection performance depends not only on the tool itself but also on the dataset and evaluation methodology used in the study.

After examining Turnitin AI detection accuracy in comparison with another detector, the next step is to review how several major AI detection tools perform side by side.

Accuracy comparison of major AI detectors

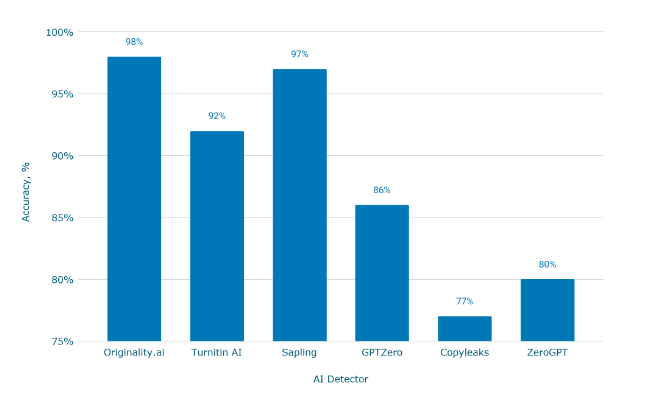

The chart below compares the reported AI detection accuracy of several widely used AI detection tools. This comparison helps address common questions, such as what is the most accurate AI detector and how accurate are AI detectors when evaluated across different platforms.

Accuracy rates for AI detectors vary significantly depending on the dataset, text type, and evaluation methodology. The following comparison aggregates results reported across different academic studies and benchmark tests.

Because of these differences, the chart should be interpreted as a comparative overview rather than a definitive ranking of detector performance.

- Originality.ai shows the highest reported accuracy at 98%, followed closely by Sapling at 97%.

- Turnitin AI reports an accuracy of 92%, placing it between the top-performing detectors and lower-performing tools.

- Copyleaks and ZeroGPT show lower accuracy levels at 77% and 80%, while GPTZero reports 86% accuracy in the evaluated results.

What is the most accurate AI detector? Accuracy comparison across tools

The comparison illustrates that reported AI detection accuracy can differ considerably between AI detectors. While some tools report accuracy levels above 95%, others show performance closer to 77-86%, depending on the evaluation.

These differences help explain why questions such as what is the most accurate AI detector remain open to interpretation. Since detection results depend on datasets, evaluation methods, and the types of text being analyzed, reported accuracy values should be viewed as comparative indicators rather than fixed performance guarantees.

Beyond comparing individual detectors, another important factor affecting AI detection accuracy is the type of AI model that generated the text.

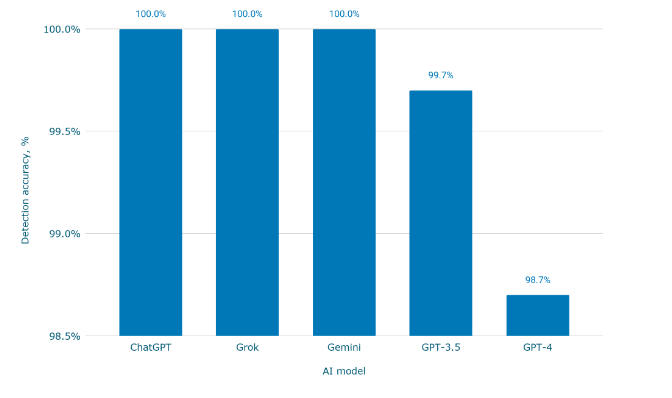

AI detection accuracy by LLM source

The chart below shows how accurately AI detection systems identify text generated by different large language models (LLMs). Evaluating detection performance across multiple models helps address broader questions, such as how accurate AI is when identifying AI-generated text from different sources.

Reported accuracy can vary depending on the dataset, the structure of the text, and the evaluation methodology used in each study. As a result, detection performance may differ when analyzing outputs from different AI models.

The values in the chart represent reported results from specific evaluations rather than a single standardized benchmark.

Understanding these differences helps explain why discussions about whether AI detectors are accurate often depend on the AI model being tested.

- AI-generated text from ChatGPT, Grok, and Gemini was detected with 100.0% accuracy in the evaluated tests.

- Detection accuracy for GPT-3.5 reached 99.7%, indicating near-perfect identification of AI-generated content.

- GPT-4 showed slightly lower detection accuracy at 98.7%, though it still remained above the 98% level.

How detection accuracy varies across different AI models

The results suggest that AI detection systems can achieve high accuracy when identifying text generated by major LLM platforms. In the dataset analyzed, detection accuracy ranged from 98.7% to 100% depending on the AI model.

These findings provide additional context for questions such as whether AI detectors are accurate and how effectively detection systems distinguish AI-generated content. While the reported results demonstrate strong detection capabilities across several major LLMs, variations across datasets and evaluation methods mean that real-world performance may differ depending on the specific text source being analyzed.

In addition to the AI model that generates the text, another factor that influences AI detection accuracy is the type of content being analyzed.

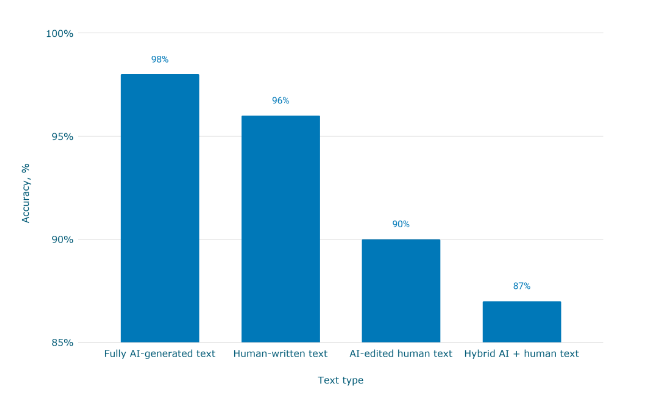

Detection accuracy by text type

The chart below compares detection performance across different text categories, including fully AI-generated content, human-written text, AI-edited writing, and hybrid AI–human content.

These distinctions are important when evaluating how accurate AI detectors are, because the level of AI involvement in the text can significantly affect detection results. For example, identifying fully AI-generated text is typically easier than detecting partially edited or hybrid content.

Accuracy rates for AI detectors vary depending on the dataset, text structure, and evaluation methodology. The values shown in the chart reflect results reported in a specific evaluation rather than a universal benchmark.

Understanding these differences also helps explain ongoing discussions about whether AI detectors are reliable when analyzing mixed or partially AI-assisted writing.

- Detection accuracy reached 98% for fully AI-generated text, representing the highest performance among the evaluated text types.

- AI detectors correctly classified 96% of human-written texts, indicating a relatively low rate of false positives in this dataset.

- Accuracy dropped to 90% for AI-edited human text and 87% for hybrid AI–human content, showing that mixed authorship is more difficult to detect.

How text type affects AI detection accuracy

The results show that AI detection accuracy varies depending on how the content was created. Systems perform best when analyzing fully AI-generated text, where detection accuracy reached 98%, while mixed or partially edited content presents greater challenges.

These findings provide additional context for questions such as how accurate AI detectors are and whether AI detectors are reliable when evaluating real-world writing. As the use of AI-assisted editing tools increases, distinguishing between human-written, AI-edited, and hybrid content may become a key factor influencing the performance of AI detection systems.

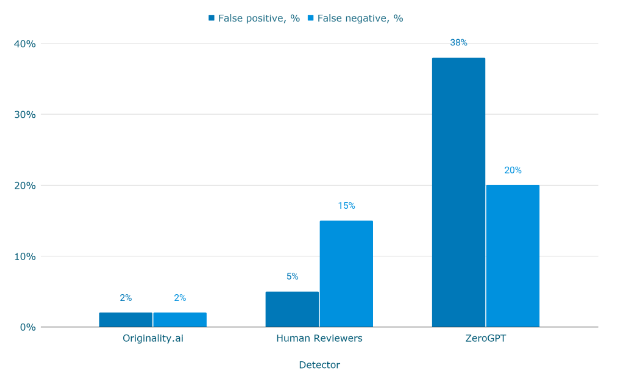

While accuracy metrics provide an overall view of AI detection accuracy, evaluating false positives and false negatives helps answer another important question: can AI detectors be wrong?

False positive and false negative rates in AI detection

The chart below compares the error rates of different evaluators, including automated AI detectors and human reviewers. These results are typically reported in research evaluating AI detector paper and AI checker paper methodologies, where confusion matrix metrics such as false positives and false negatives are used to measure reliability.

False positives occur when human-written text is incorrectly identified as AI-generated, while false negatives occur when AI-generated content is classified as human-written. Both types of errors influence whether AI detectors are reliable in real-world applications.

Accuracy rates for AI detectors vary depending on the dataset and evaluation method. The values presented below reflect results reported in specific studies rather than a single standardized benchmark.

- Originality.ai recorded the lowest error rates with 2% false positives and 2% false negatives in the evaluated dataset.

- Human reviewers showed a higher false negative rate of 15%, meaning AI-generated text was missed in 15% of cases.

- ZeroGPT demonstrated the highest error rates with 38% false positives and 20% false negatives in the same evaluation.

Can AI detectors be wrong? Error rates in AI detection systems

The data illustrates that evaluating AI detection accuracy requires more than a single accuracy percentage. False positives and false negatives provide additional insight into how detection systems perform when classifying both AI-generated and human-written text.

In this dataset, automated detectors showed error rates ranging from 2% to 38%, while human reviewers demonstrated a 15% false negative rate. These differences help explain why discussions about whether AI detectors can be wrong remain relevant in academic and technical research.

Overall, results reported in multiple AI detector papers and AI checker paper studies suggest that both automated tools and human reviewers can make classification errors, reinforcing the importance of interpreting AI detection results within the context of specific datasets and evaluation methods.

After examining detection accuracy, dataset variation, and error rates, it is useful to review how different AI detection tools compare overall in reported accuracy.

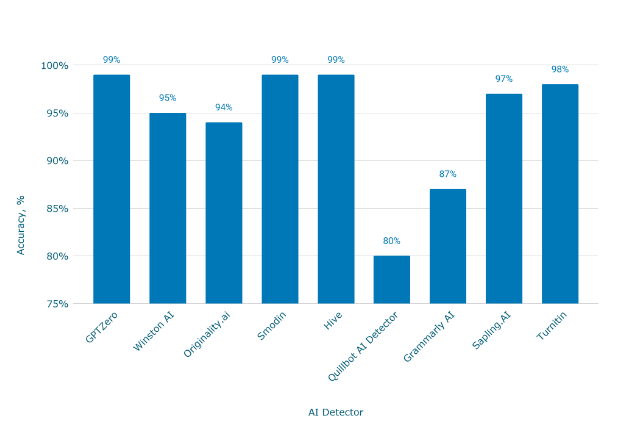

Most accurate AI content detectors

The chart below compares several widely used AI detection tools based on reported accuracy values from different datasets and evaluations. This comparison helps address the common question of what is the most accurate AI detector by summarizing performance indicators reported across different tools.

Accuracy rates for AI detectors vary significantly depending on the dataset, text type, and evaluation methodology. The following comparison aggregates results reported across different evaluations and benchmark tests.

Because of these methodological differences, comparisons should be interpreted as a general overview rather than a definitive ranking of detector performance.

- GPTZero, Smodin, and Hive report the highest accuracy levels at 99%, according to the available data.

- Turnitin reports 98% accuracy, while Sapling.AI shows 97% accuracy with relatively low false positive rates.

- Lower accuracy values appear for some tools, including Quillbot AI Detector at 80% and Grammarly AI Detector at 87%.

What is the most accurate AI detector? Accuracy comparison of leading tools

The comparison shows that reported AI detection accuracy varies substantially across AI detection platforms. Some systems report accuracy levels close to 99%, while others operate closer to the 80-90% range, depending on the evaluation conditions.

These differences help explain why questions such as what is the most accurate AI detector remain difficult to answer definitively. Detection performance depends not only on the tool itself but also on the dataset, testing methodology, and the type of content being analyzed.

As a result, comparisons between detectors should be interpreted within the context of reported studies rather than treated as fixed performance guarantees across all use cases.

Conclusions

- The available data on AI detection accuracy indicates that modern AI detection systems can achieve relatively high performance in controlled research settings. Across the academic studies analyzed, reported accuracy values ranged from 83% to 100%, with most studies reporting results between 96% and 99%, suggesting that many AI detectors are capable of reliably identifying AI-generated text under specific testing conditions.

- Comparisons between individual tools show that AI detection accuracy varies substantially across detection platforms. In the evaluated datasets, reported accuracy ranged from 77% for Copyleaks to 98% for Originality.ai, while Turnitin AI reported 92% accuracy and GPTZero reported 86% accuracy, indicating that different tools can produce noticeably different results.

- Additional analysis shows that Turnitin AI detection accuracy may vary depending on the dataset and evaluation method. In the dataset examined in this article, Turnitin detected AI-generated text with 29% accuracy, compared with 83% for Originality.ai, while both tools demonstrated relatively high accuracy when identifying human-written text (93% and 96% respectively).

- Detection performance also varies depending on the AI model that generated the text. In the evaluated results, AI detectors identified text produced by ChatGPT, Grok, and Gemini with 100% accuracy, while slightly lower detection rates were reported for GPT-3.5 (99.7%) and GPT-4 (98.7%), demonstrating that detection results can differ depending on the LLM source.

- The analysis further shows that text structure and authorship type influence detection performance. Detection accuracy reached 98% for fully AI-generated text, 96% for human-written text, 90% for AI-edited human content, and 87% for hybrid AI–human writing, indicating that mixed or partially AI-assisted content can be more difficult for detectors to classify correctly.

- Error rate analysis indicates that both automated systems and human evaluators can produce classification errors. In the dataset analyzed, false positive rates ranged from 2% to 38%, while false negative rates ranged from 2% to 20%, and human reviewers demonstrated a 15% false negative rate, illustrating that AI detection results are not error-free.

- Overall, the findings suggest that AI detection accuracy depends strongly on the dataset, evaluation methodology, AI model source, and text type being analyzed. As a result, reported accuracy values should be interpreted as context-dependent results rather than universal performance guarantees when evaluating whether AI detectors can reliably identify AI-generated content.

Sources

- Barlow, Written. “9 Best AI Detectors With The Highest Accuracy in 2026.” AI Detection Resources | GPTZero, 2 Jan. 2026, https://gptzero.me/news/best-ai-detectors/. Accessed 16 March 2026.

- Gillham, Jonathan. “AI Detection Accuracy Studies – Meta-Analysis of 13 Studies – Originality.AI.” Originality.AI, https://originality.ai/blog/ai-detection-studies-round-up. Accessed 16 March 2026.

- “We Have 99% Accuracy in Detecting AI: Originality.Ai Study – Originality.AI.” Originality.AI, https://originality.ai/blog/ai-accuracy. Accessed 16 March 2026.